Be Prepared; Rankings and Traffic Will Fluctuate

Any time a significant algorithm update occurs, webmasters should expect existing rankings for broad, generic keywords to decline and, in some cases, disappear for good.

Why do rankings fluctuate?

It’s largely because a large search engine like Google has enough user data and feedback to adjust which results (domains) it displays organically when users type in keywords with an inherently broad search intent (1). At some point, Google has effectively determined that a majority of users are looking for X, not Y.

If you’re a big brand with hundreds of URLs in Google’s index, you’re X. Congratulations, your site likely has enough authority AND did a good enough job of matching the intent of a broad search term.

If, however, your site has significantly fewer pages, you’re Y and you’re going to get muscled out of page one. Sorry.

It’s not a coin flip. It has more to do with Google constantly trying to understand user intent. Say a user types in “apple.” Google’s algorithm has evolved to understand that the majority of people are searching for information on the company, Apple, not the fruit.

How do we know this?

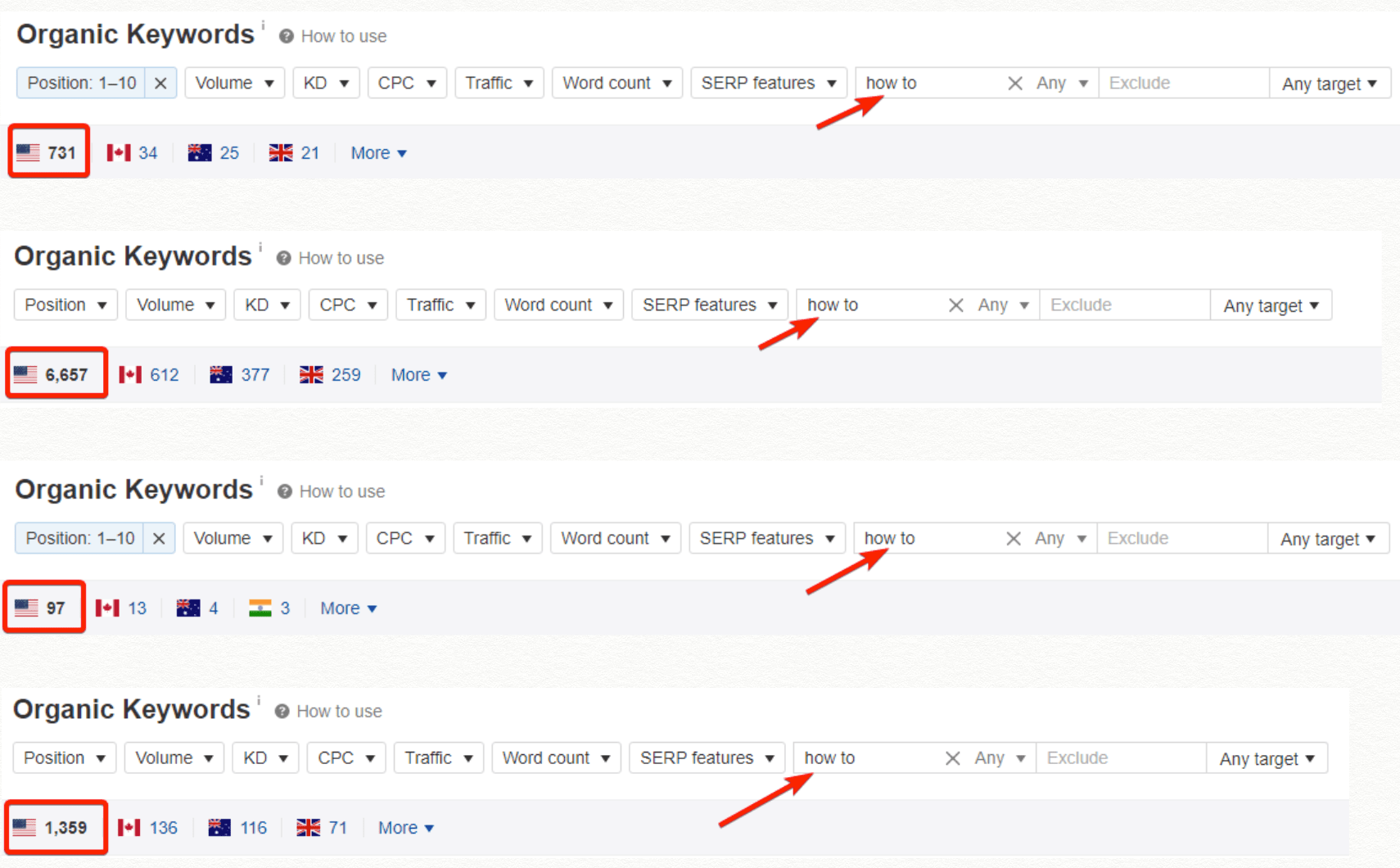

Our study of food blogging sites affected by the November 2019 algorithm update revealed that negative ranking fluctuations for broad, generic queries were common across a large portion of the participants. Another key insight from the study showed where the food blogs fell short on content strategy:

“…have a hard time ranking higher for longer, more descriptive queries with a significant number of monthly searches, as well as long-tail keywords that are relatively uncompetitive”

Furthermore, the in-depth study exposed a weakness that can be easily corrected:

“The examined food blogs often manage to rank around 1,000 keywords containing the ‘how-to’ phrase. However, only less than 10% of those keywords rank on Page 1.”

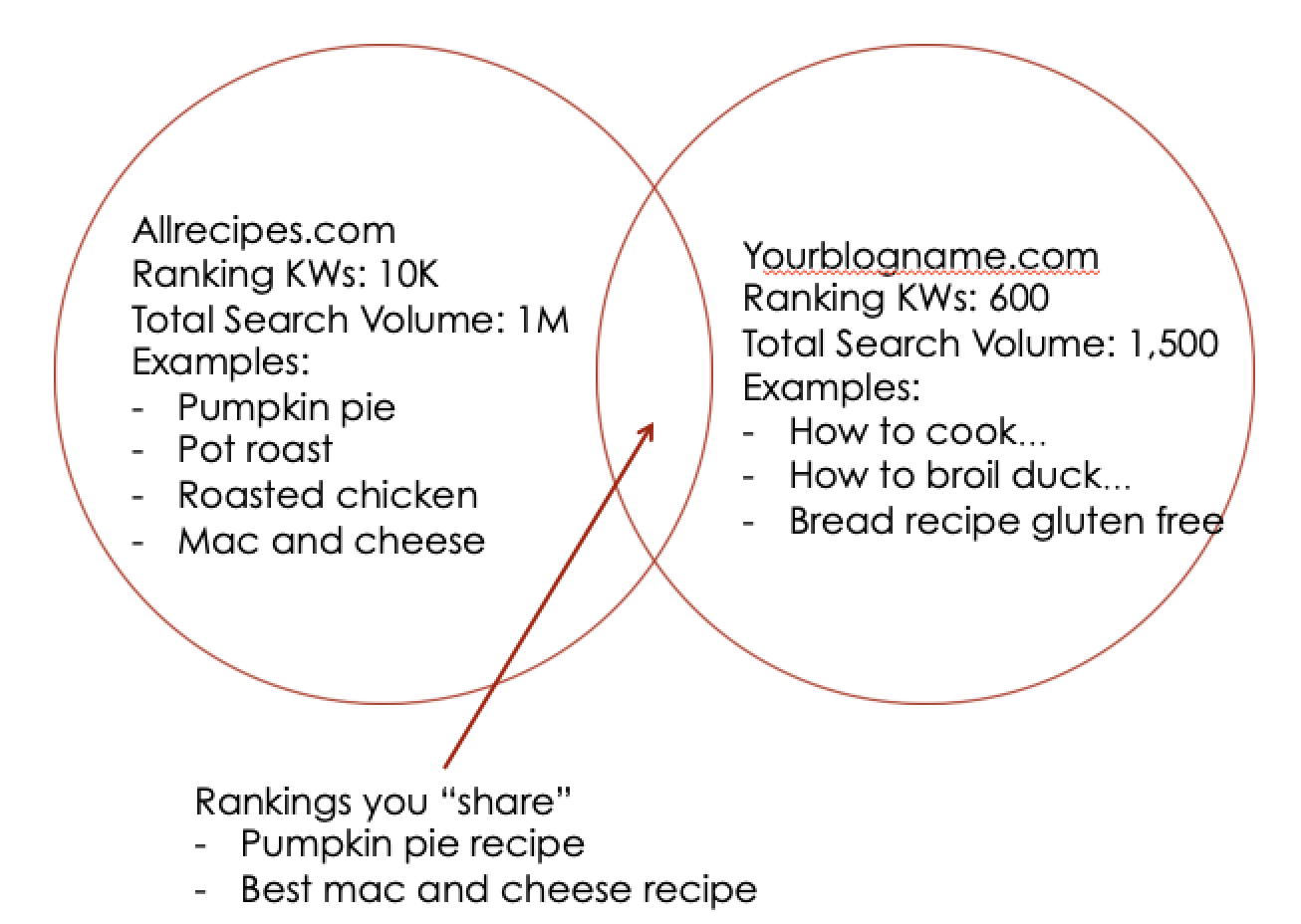
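If you want to run the same check on your own blog, here is a minimal Python sketch of the arithmetic behind that statistic. It is not the method used in the study. The function name `page_one_share`, the assumption that Page 1 means positions 1 through 10, and the sample positions are all made up for illustration; swap in the positions from your own rank-tracking export.

```python
def page_one_share(positions, page_size=10):
    """Return the fraction of ranked keywords that sit on page 1.

    Assumes page 1 covers positions 1..page_size (10 by default).
    """
    if not positions:
        return 0.0
    on_page_one = sum(1 for p in positions if 1 <= p <= page_size)
    return on_page_one / len(positions)


# Hypothetical ranking positions for ten "how-to" keywords (sample data only).
how_to_positions = [3, 14, 27, 8, 41, 19, 55, 12, 33, 62]

share = page_one_share(how_to_positions)
print(f"{share:.0%} of tracked how-to keywords rank on page 1")
```

A low share here suggests you already have content Google considers relevant, and that improving those existing posts may pay off faster than writing new ones.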

With this insight into how the November 2019 algorithm update shifted rankings, bloggers can begin to reevaluate their approach to content. Using a Venn diagram as an illustrative example, look at it this way: as a small to midsize blog (Yourblogname.com), it’s much more profitable to build out content that ranks for long-tail searches than to chase generic searches that are too broad for a site your size to win.

Blogs whose content speaks to very specific, long-tail searches have a better chance of capturing and retaining those rankings than a large site that appeals to a broad audience.

Why it’s important to be prepared

- A: As a blogger, you never know when Google is going to change the rules. In fact, no one does.

- B: This means that your blog shouldn’t be wholly dependent on organic traffic from generic search terms.

Traffic diversification is a strategic advantage, especially when you’re a site with fewer than 1,000 pages and limited resources (as in, it’s only you pumping out content, not a team of paid writers and SEO-savvy individuals!).

If you only write content that targets head terms like “chicken pot pie”, Google will be less likely to match your page to that type of search for two reasons:

- It’s still too broad to determine the user’s intent

- Domains that have hundreds of pages ranking around this term are likely a better bet to serve up to the user

Need to Optimize Your Blog?

We’ve been providing SEO for publishers for many years; it’s one of our many specialties. Whether you have hundreds of posts or are just starting out, let’s take a look at your blog and see how we can get you in front of more readers!

Final thoughts

The most proactive way to prepare for future algorithm changes is to plan for how your blog will get by without traffic from generic keywords.

Let larger domains have that traffic.

As a small to midsize domain, your best defense is content that ranks for a handful of long-tail searches with a sizable monthly search volume.
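To make that concrete, here is a rough Python sketch of how you might shortlist long-tail keywords from a keyword research export. Everything in it is an assumption for illustration: the `Keyword` class, the `long_tail_candidates` helper, the cutoffs (at least four words, at least 500 monthly searches), and the sample search volumes are invented, so adjust them to your own niche and data.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    phrase: str
    monthly_searches: int


def long_tail_candidates(keywords, min_words=4, min_searches=500):
    """Keep longer, more specific phrases that still have meaningful volume.

    The thresholds are illustrative assumptions, not industry standards.
    """
    picked = [
        k for k in keywords
        if len(k.phrase.split()) >= min_words
        and k.monthly_searches >= min_searches
    ]
    # Highest-volume candidates first.
    return sorted(picked, key=lambda k: k.monthly_searches, reverse=True)


# Made-up sample data; replace with your own keyword export.
keywords = [
    Keyword("chicken pot pie", 90000),
    Keyword("how to thicken chicken pot pie filling", 1200),
    Keyword("gluten free chicken pot pie crust recipe", 700),
    Keyword("chicken pot pie with leftover turkey and biscuits", 90),
]

for k in long_tail_candidates(keywords):
    print(f"{k.phrase}: {k.monthly_searches} searches/month")
```

In this sample, the head term is dropped for being too broad and the last phrase for having too little volume, which leaves the two descriptive queries a smaller blog has a realistic shot at.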

Resources

(1) Searcher Intent: The Overlooked ‘Ranking Factor’ You Should Be Optimizing For, by Joshua Hardwick